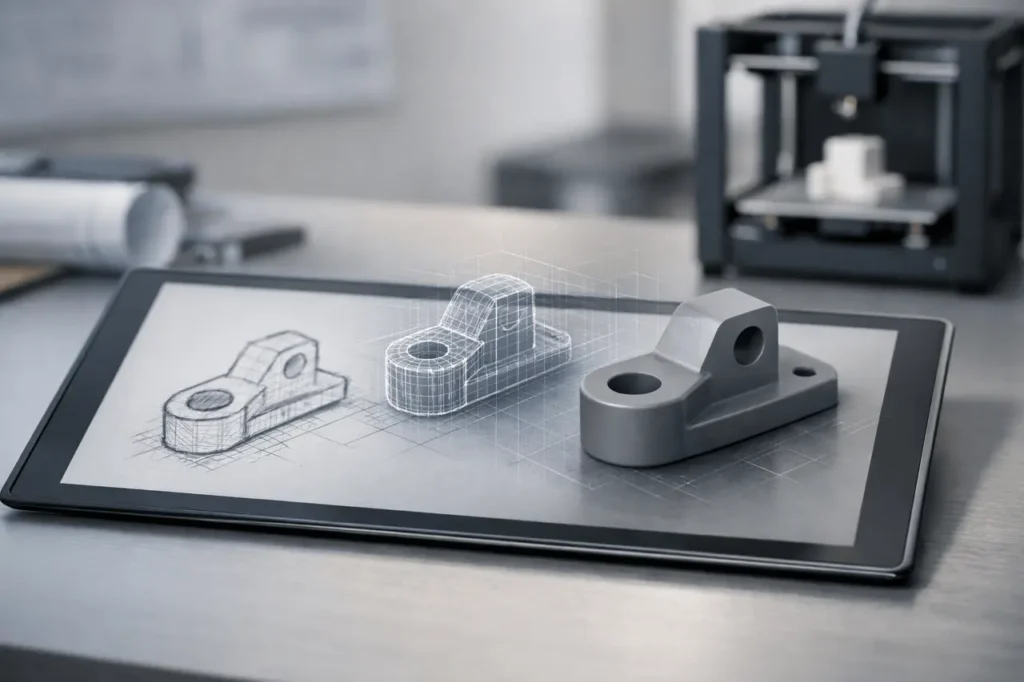

The upgraded Gemini 3 Deep Think mode can now convert hand-drawn sketches into 3D-printable files, expanding its role beyond advanced reasoning into real-world prototyping.

Google has announced a major upgrade to its highly specialized algorithmic reasoning system, Gemini 3 Deep Think, which allows users to convert hand-drawn sketches into 3D models that can be printed. The new feature is available to Google AI Ultra subscribers starting today.

The update enhances Gemini’s capabilities, moving beyond logic-based text and code generation into physical design workflows, allowing engineers, researchers, and product developers to shift from concept sketch to printed object in a single AI workspace.

The company states that users can select “Deep Think” from the tools menu to access the new capabilities.

What Happened?

The recently updated Gemini 3 Deep Think takes a sketch, analyzes its geometric structure, and generates a 3D design along with a file suitable for 3D printing.

In practice, the workflow looks like this:

- A user uploads a drawing or sketch.

- Deep Think interprets the drawing.

- The system creates structured 3D models.

- It generates a 3D print-ready file.

Google describes the technology as designed for practical use, particularly for researchers interpreting complex data and engineers modeling physical systems through code.

This upgrade is currently available only to Google AI Ultra members, Google’s premium AI tier.

What Is Gemini 3 Deep Think?

Gemini is Google’s top multimodal AI system, developed by Google and its AI research division, Google DeepMind. Gemini competes directly with advanced reasoning models developed by OpenAI and Anthropic.

The “Deep Think” mode in Gemini is advertised as a specialized reasoning configuration optimized for:

- Multi-step reasoning

- Scientific modeling

- Code-based simulation

- Complex mathematical reasoning

Until now, its focus has been mainly computational and cognitive: tackling difficult reasoning problems, generating structured code, and analysing layered datasets.

With this version, Google pushes Deep Think into hybrid territory, combining reasoning, geometric interpretation, and fabrication-ready output.

Why Does This Matter?

The introduction of sketch-to-3D capabilities signals three broader shifts in generative AI:

1. AI Is Moving From Ideas to Fabrication

Most AI systems today produce images, text, or software. Turning an unstructured 2D drawing into a printable, structured 3D model requires:

- Spatial reasoning

- Geometry reconstruction

- Mesh or parametric modeling

- Export formats compatible with 3D printers

This bridges digital ideation and physical manufacturing.

If it proves reliable, this feature could ease prototyping, particularly for engineering and design teams who frequently switch between sketching programs, CAD software, and export tools.

2. It Expands Gemini’s Practical Utility

Google has always positioned Gemini as a useful tool for research and professional use. This update reinforces the positioning.

Instead of being solely chat-based, Gemini 3 Deep Think is developing into a workflow tool that supports:

- Code-driven modeling

- Structural analysis

- Design-to-object generation

For hardware startups, robotics researchers, and mechanical engineers, this may shorten early design iterations.

3. Premium AI Differentiation

The feature is available only to Google AI Ultra subscribers, continuing a trend among AI providers of reserving advanced capabilities for premium tiers.

Competitors have adopted similar strategies:

- OpenAI’s paid access to advanced reasoning capabilities and tools

- Anthropic’s tiered model access

- Enterprise-oriented AI services across different providers

Restricting 3D fabrication capabilities to paid tiers suggests Google sees strong demand from professionals in engineering and research.

Technical Implications

Although Google hasn’t publicly explained the architectural changes behind this update, sketch-to-3D conversion usually involves:

- Image interpretation using multimodal vision models

- Extraction of geometry and contours

- Reconstruction of the 3D topology

- File generation (commonly STL, OBJ, or similar formats)
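To make the last two steps concrete, here is a minimal Python sketch of the simplest possible case: a 2D outline that has already been extracted from a drawing is extruded into a prism and written out as ASCII STL. This is not how Deep Think works internally, which Google has not documented. The function names, the convex-outline restriction, and the sample square are all assumptions chosen for illustration.

```python
from typing import List, Tuple

Point2D = Tuple[float, float]
Point3D = Tuple[float, float, float]
Triangle = Tuple[Point3D, Point3D, Point3D]


def extrude_convex_outline(outline: List[Point2D], height: float) -> List[Triangle]:
    """Extrude a convex, counter-clockwise 2D outline into a closed prism.

    Assumption: the outline is convex, so a simple triangle fan covers the caps.
    Real sketches would need a general polygon triangulation step.
    """
    n = len(outline)
    bottom = [(x, y, 0.0) for x, y in outline]
    top = [(x, y, height) for x, y in outline]
    triangles: List[Triangle] = []
    for i in range(1, n - 1):
        # Bottom cap: reversed winding so the normal points down (-z).
        triangles.append((bottom[0], bottom[i + 1], bottom[i]))
        # Top cap: counter-clockwise winding so the normal points up (+z).
        triangles.append((top[0], top[i], top[i + 1]))
    for i in range(n):
        j = (i + 1) % n
        # Each side wall is a quad split into two outward-facing triangles.
        triangles.append((bottom[i], bottom[j], top[j]))
        triangles.append((bottom[i], top[j], top[i]))
    return triangles


def facet_normal(tri: Triangle) -> Point3D:
    """Unit normal of a triangle via the right-hand rule."""
    (ax, ay, az), (bx, by, bz), (cx, cy, cz) = tri
    ux, uy, uz = bx - ax, by - ay, bz - az
    vx, vy, vz = cx - ax, cy - ay, cz - az
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = (nx * nx + ny * ny + nz * nz) ** 0.5
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # degenerate triangle
    return (nx / length, ny / length, nz / length)


def to_ascii_stl(triangles: List[Triangle], name: str = "sketch") -> str:
    """Serialize triangles in the ASCII STL layout (solid / facet / vertex)."""
    lines = [f"solid {name}"]
    for tri in triangles:
        nx, ny, nz = facet_normal(tri)
        lines.append(f"  facet normal {nx:.6e} {ny:.6e} {nz:.6e}")
        lines.append("    outer loop")
        for x, y, z in tri:
            lines.append(f"      vertex {x:.6e} {y:.6e} {z:.6e}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


# Hypothetical input: a 20 x 20 square outline extruded to a height of 5.
# STL carries no units; slicers commonly interpret the numbers as millimetres.
square = [(0.0, 0.0), (20.0, 0.0), (20.0, 20.0), (0.0, 20.0)]
mesh = extrude_convex_outline(square, height=5.0)
stl_text = to_ascii_stl(mesh)
```

Writing `stl_text` to a `.stl` file gives something a slicer can open. The hard parts of the real problem, inferring depth and intent from a rough drawing, are exactly what this sketch leaves out.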

If Deep Think handles this internally, without external CAD tools, it suggests a significant integration between Gemini’s vision analysis and geometric modeling capabilities.

It is also a sign that Google has invested in structured output creation rather than only generative creativity.

But without publicly available benchmarks or technical documentation, it is not clear:

- How accurately complex shapes are reconstructed

- Whether parametric control is available

- Which file formats are supported

- How ambiguous sketches are handled

Until users test the technology extensively, claims about performance remain largely marketing.
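One test users can run themselves on any exported mesh, regardless of how it was generated, is a basic printability check: is the surface closed and consistently oriented? The Python sketch below is a minimal version under stated assumptions. The function names and the tetrahedron sample are illustrative, and it assumes shared vertices have exactly equal coordinates, which real exports may need to be snapped to.

```python
from collections import Counter
from typing import List, Tuple

Point3D = Tuple[float, float, float]
Triangle = Tuple[Point3D, Point3D, Point3D]


def is_watertight(triangles: List[Triangle]) -> bool:
    """True if every directed edge occurs once and its reverse occurs once.

    That condition means each edge is shared by exactly two triangles with
    consistent winding, i.e. the surface is closed with no flipped faces.
    """
    edges: Counter = Counter()
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            edges[(u, v)] += 1
    return bool(edges) and all(
        count == 1 and edges.get((v, u), 0) == 1
        for (u, v), count in edges.items()
    )


def signed_volume(triangles: List[Triangle]) -> float:
    """Enclosed volume via summed signed tetrahedra; positive if normals face out."""
    total = 0.0
    for (ax, ay, az), (bx, by, bz), (cx, cy, cz) in triangles:
        total += (
            ax * (by * cz - bz * cy)
            - ay * (bx * cz - bz * cx)
            + az * (bx * cy - by * cx)
        ) / 6.0
    return total


# Illustrative sample: a unit right tetrahedron with outward-facing triangles.
o, x, y, z = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)
tetra = [(o, y, x), (o, x, z), (o, z, y), (x, y, z)]

closed = is_watertight(tetra)        # all four faces present
open_mesh = is_watertight(tetra[:3])  # slanted face removed, leaving a hole
volume = signed_volume(tetra)
```

A mesh that fails this check will often confuse a slicer, so it is a cheap first filter before judging dimensional accuracy.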

Industry Perspective

This move places Google alongside a growing class of AI tools designed to automate design.

Many startups and AI labs are investigating AI-assisted CAD, generative industrial design, and automated part optimization. Most of these, however, require specialized software environments.

By integrating 3D-printable output into Gemini, Google is collapsing:

Sketch – Model – Export

into a single AI interface.

This could be appealing to:

- Research labs

- University engineering departments

- Robotics teams

- Rapid prototyping environments

- Maker communities

If the technology proves reliable, it could reduce CAD overhead in the early stages of design.

Yet, high-end mechanical design typically requires exact tolerances, simulation validation, and compliance checks – areas in which AI tools need to demonstrate their reliability before replacing conventional CAD pipelines.

Competitive Landscape

The AI reasoning race has intensified over the last year, as companies compete not just on language benchmarks but also on applied reasoning.

OpenAI and Anthropic have focused on reasoning depth and safety alignment. Google, with Gemini 3 Deep Think, seems to be focusing on applied technical workflows.

This could reflect the broader direction of Google DeepMind’s research, which combines multimodal reasoning with practical application.

It also supports the notion that AI models are moving beyond chatbot performance alone and towards domain-specific capabilities.

Who Is Affected?

Immediate beneficiaries:

- Google AI Ultra subscribers

- Engineering teams using Gemini

- Computational modeling researchers

- Hardware-focused startups

Potential longer-term impact:

- CAD software vendors

- Rapid prototyping firms

- 3D printing ecosystems

As AI tools increasingly automate geometric modeling, traditional design software may need to integrate AI more to remain competitive.

What Happens Next?

Key questions now include:

- Will Google extend this feature beyond Ultra subscribers?

- Will enterprise customers receive additional fabrication tools?

- Will Deep Think integrate simulation testing or physics validation?

If sketch-to-3D proves stable and precise, it may develop into:

- Full parametric design tools

- Automated part optimization

- Physics-aware modeling

That would position Gemini as more than a reasoning engine: it could also serve as a design infrastructure layer.

For now, the release remains a premium feature targeted at high-value professional users.

My Final Thought

This Gemini 3 Deep Think upgrade marks a significant milestone in Google’s effort to extend AI beyond pure reasoning into practical applications. By converting sketches into 3D-printable models, Google is testing the boundary between digital design and physical manufacturing.

Whether the feature can be transformative will depend on its reliability, accuracy, and the depth of integration. Strategically, it indicates the direction that advanced AI is headed: towards tools that don’t just think, but actually build.
